Big Announcement: Meta MTIA V2 Chips For AI Workloads

By Alexander Johnson - Apr 11, 2024 | Updated On: 11 April, 2024 | 2 min read

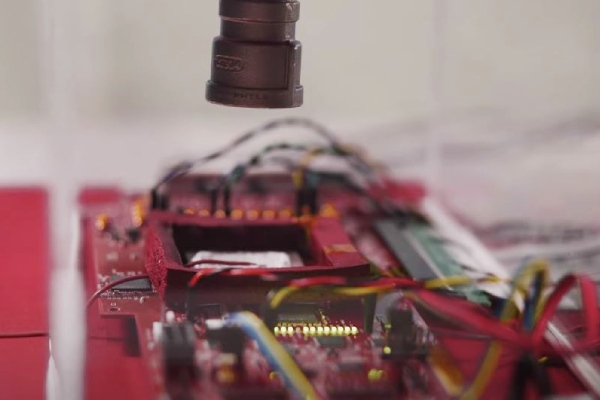

Meta MTIA V2 Chips For AI Workloads. Image Credit: Social Media.

Meta unveiled the first-generation Meta Training and Inference Accelerator (MTIA v1) last year. It has now upped its game by announcing the next generation of MTIA.

Meta’s MTIA v2 chips are built with AI workloads in mind. So, let’s find out about the chips and their features.

What Is MTIA?

MTIA is a long-term project that aims to develop the most efficient architecture for Meta’s specific workloads. As AI workloads grow more critical to its products and services, this efficiency will help the company give users around the world the best possible experience.

Meta released its newest AI chips just two days after Google announced its first custom Arm-based Axion processors.

Last year, MTIA v1, Meta’s first-generation inference accelerator, was unveiled. It was created specifically for deep learning recommendation models, improving many experiences across Meta’s apps and platforms.

Meta MTIA V2 Chips For AI Workloads

The next generation of MTIA is part of Meta’s broader full-stack development effort for custom, domain-specific silicon that addresses its unique workloads and systems.

The new version doubles the compute and memory bandwidth of Meta’s previous solution while staying closely tied to the company’s workloads.

The chip’s architecture is focused on providing the right balance of compute, memory bandwidth, and memory capacity for serving ranking and recommendation models.

The chips have already been deployed in Meta’s data centers and now serve production models. According to the company, the results are mostly positive, allowing it to invest in more computing power for more intensive AI workloads.

Features And Specs

Meta’s MTIA v2 chips consist of an 8×8 grid of processing elements (PEs). These PEs deliver significantly higher dense compute performance, nearly 3.5 times that of MTIA v1.
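
As a rough illustration of these numbers, the short Python sketch below counts the processing elements in an 8×8 grid and expresses the quoted speedup against a normalized MTIA v1 baseline. The baseline value of 1.0 and all names used are assumptions for illustration only, not figures published by Meta.

```python
# Illustrative arithmetic only; the names and the 1.0 baseline are assumptions.
GRID_ROWS = 8
GRID_COLS = 8
DENSE_SPEEDUP_VS_V1 = 3.5  # "nearly 3.5 times", as quoted in the article


def pe_count(rows: int, cols: int) -> int:
    """Return the number of processing elements in a rows x cols grid."""
    return rows * cols


def relative_dense_compute(v1_baseline: float, speedup: float) -> float:
    """Return dense compute expressed in units of the v1 baseline."""
    return v1_baseline * speedup


total_pes = pe_count(GRID_ROWS, GRID_COLS)
v2_dense = relative_dense_compute(1.0, DENSE_SPEEDUP_VS_V1)

print(f"Processing elements: {total_pes}")
print(f"Dense compute relative to v1 (v1 = 1.0): about {v2_dense}")
```

An 8×8 layout gives 64 PEs in total, and the relative figure says nothing about absolute throughput, which the article does not state.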

Similarly, the new MTIA design features an improved network-on-chip (NoC) architecture. This improvement doubles the bandwidth and lets different PEs coordinate with one another at low latency.

Furthermore, by providing more SRAM capacity than typical GPUs, the chip can achieve good utilization when batch sizes are limited. It can also deliver enough compute when larger amounts of potential concurrent work are available.
