Analysis Of Intel Gaudi 3 Vs Nvidia H100: The Final Verdict

Intel Gaudi 3 Vs Nvidia H100. Image Credit: Social Media.

As demand for AI compute surges, two major brands, Intel and NVIDIA, are competing head to head with their latest accelerators.

This article compares the Intel Gaudi 3 with the Nvidia H100 to see which chip comes out ahead.

Intel Gaudi 3

Let’s examine Gaudi 3’s main advantages over Nvidia’s H100.

Cost-Effective Champion: Intel positions Gaudi 3 as the cheaper option, pairing a budget-friendly price with competitive performance.

Price is one of Intel’s most significant advantages over Nvidia. Businesses or individuals looking for a budget-friendly AI solution are likely to lean toward Gaudi 3.

Power: Gaudi 3 promises to be more power efficient, lowering the operating costs for data centers.

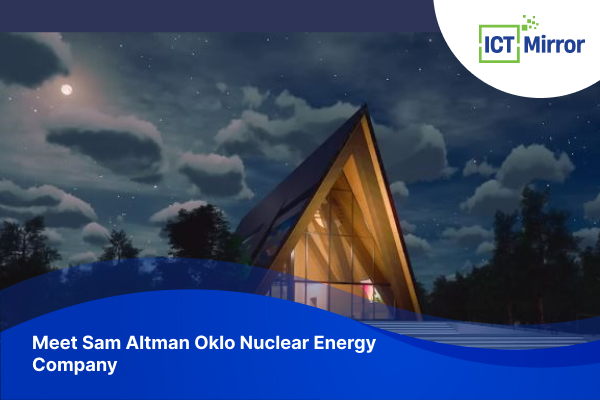

This is another significant advantage for businesses and data centers, since they can save on both the chips and their operating costs.

Inference Advantage: Intel also claims that Gaudi 3 will deliver faster inference, which suits workloads such as image and speech recognition.

Full Specifications of Intel Gaudi 3

| Specs | Info |
| --- | --- |
| Architecture | Designed specifically for AI training and inference workloads; eight matrix multiplication engines; 64 fully programmable Tensor Processor Cores |
| Supported Data Types | FP32 (single-precision floating-point), TF32 (TensorFloat-32), BF16 (brain floating-point 16), FP16 (half-precision floating-point), FP8 (reduced-precision floating-point) |
| Memory | High Bandwidth Memory (HBM2e); total capacity: 128GB; memory bandwidth: 3.7 TB/s; on-board SRAM: 96MB |
| Interconnects | 16 lanes of PCIe 5.0 for high-speed communication |
| Form Factors | OAM (OCP Accelerator Module) HL-325L: high-performance mezzanine card commonly used in GPU-based systems; PCIe card (availability in Q4 2024) |
| Performance | Claimed performance of 1,835 TFLOPS for FP8 operations |
| Power Consumption | OAM HL-325L: 900W TDP (Thermal Design Power) |
| Other Features | Expected to be compatible with various deep learning frameworks through software tools |

Table Source: Intel

All of this information is based on publicly available data published and claimed by Intel.

These figures may not be fully accurate, since independent performance reports and detailed technical specifications are not all available yet.
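To put the claimed figures in perspective, here is a minimal Python sketch that derives two rough ratios from the table: FP8 throughput per watt and FP8 FLOPs available per byte of memory traffic. The constants are Intel’s claimed values copied from the table; the function names are our own, and peak numbers like these are only a proxy for real-world efficiency.

```python
# Figures copied from the Gaudi 3 table (Intel's claimed values, not measurements).
GAUDI3_FP8_TFLOPS = 1835.0   # claimed FP8 throughput
GAUDI3_TDP_WATTS = 900.0     # OAM HL-325L thermal design power
GAUDI3_MEM_BW_TBPS = 3.7     # HBM2e bandwidth in TB/s


def tflops_per_watt(tflops: float, watts: float) -> float:
    """Peak throughput divided by rated power (a rough efficiency proxy)."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tflops / watts


def flops_per_byte(tflops: float, bandwidth_tbps: float) -> float:
    """Peak FLOPs available per byte moved from memory.

    TFLOPS and TB/s share the same 10^12 prefix, so the ratio is
    plain FLOPs per byte.
    """
    if bandwidth_tbps <= 0:
        raise ValueError("bandwidth must be positive")
    return tflops / bandwidth_tbps


if __name__ == "__main__":
    per_watt = tflops_per_watt(GAUDI3_FP8_TFLOPS, GAUDI3_TDP_WATTS)
    per_byte = flops_per_byte(GAUDI3_FP8_TFLOPS, GAUDI3_MEM_BW_TBPS)
    print(f"FP8 TFLOPS per watt: {per_watt:.2f}")
    print(f"FP8 FLOPs per byte of HBM traffic: {per_byte:.0f}")
```

With the table values this works out to roughly 2 TFLOPS per watt. A workload needs to perform several hundred FP8 operations per byte fetched before the compute engines, rather than memory bandwidth, become the limit.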

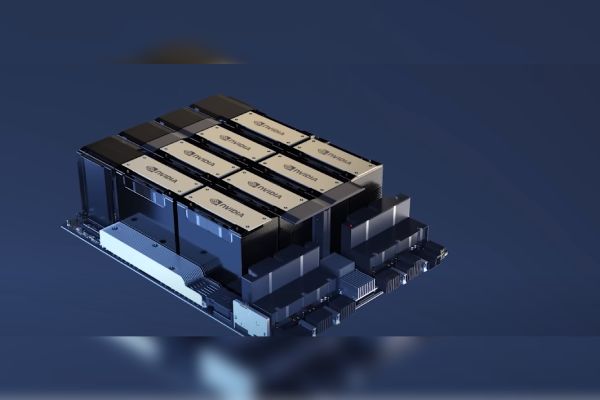

What Makes Intel Gaudi 3 Special?

- Intel claims that Gaudi 3 combines specialized engines (matrix multiplication engines and Tensor Processor Cores) for efficient AI calculations.

- Intel says Gaudi 3 can complete AI training faster and run inference across several data types, from FP32 down to FP8.

- Gaudi 3 has 128GB of high-bandwidth memory, so it can probably handle most large data sets and complex models.

- Gaudi 3 will also be available in OAM and PCIe formats, so it will fit multiple form factors.

- Intel also claims that its chip will work with many deep learning frameworks.
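How far 128GB goes depends heavily on the data type. As a rough illustration, this Python sketch estimates the memory needed for a model’s weights alone at each precision in the table. The 70-billion-parameter model is a hypothetical example, the helper names are our own, and the estimate ignores activations, caches, and optimizer state, so real requirements are higher.

```python
# Bytes per parameter for the data types listed in the Gaudi 3 table.
# TF32 is a compute format; values are assumed to be stored as 32-bit words.
BYTES_PER_PARAM = {"FP32": 4, "TF32": 4, "BF16": 2, "FP16": 2, "FP8": 1}

GAUDI3_HBM_GB = 128.0  # capacity from the table, treated as decimal GB


def weights_gb(num_params: float, dtype: str) -> float:
    """Memory for the weights only, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits(num_params: float, dtype: str, capacity_gb: float = GAUDI3_HBM_GB) -> bool:
    """True if the weights alone fit in the given memory capacity."""
    return weights_gb(num_params, dtype) <= capacity_gb


if __name__ == "__main__":
    params = 70e9  # hypothetical 70-billion-parameter model
    for dtype in BYTES_PER_PARAM:
        size = weights_gb(params, dtype)
        verdict = "fits" if fits(params, dtype) else "does not fit"
        print(f"{dtype}: {size:.0f} GB of weights, {verdict} in {GAUDI3_HBM_GB:.0f} GB")
```

For this hypothetical model, the weights fit on a single card only at FP8. Higher precisions would need the model to be split across several accelerators.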

Nvidia H100

Let’s look at why the H100 gets so much attention.

Undisputed Performance: Whatever Intel claims for Gaudi 3, the H100 has exceptional processing power.

Its high performance alone makes it suitable for complex AI training and for developing LLMs.

The Nvidia Ecosystem: Like Apple, Nvidia benefits from a tightly integrated ecosystem.

Because Nvidia offers a broad range of software and hardware, the H100 should work well within the Nvidia ecosystem and deliver optimized performance.

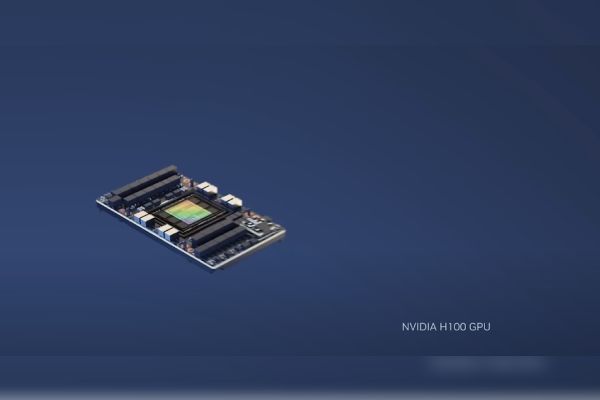

Future-Proofed Option: Nvidia leads the market, and the hype has only grown since it unveiled the GB200.

That chip is expected to be far more powerful than the H100, which suggests Nvidia will not drop to second place anytime soon.

Nvidia H100 Full Specs

| Form Factor | H100 SXM | H100 PCIe | H100 NVL |
| --- | --- | --- | --- |
| FP64 | 34 teraFLOPS | 26 teraFLOPS | 68 teraFLOPS |
| FP64 Tensor Core | 67 teraFLOPS | 51 teraFLOPS | 134 teraFLOPS |
| FP32 | 67 teraFLOPS | 51 teraFLOPS | 134 teraFLOPS |
| TF32 Tensor Core | 989 teraFLOPS* | 756 teraFLOPS* | 1,979 teraFLOPS* |
| BFLOAT16 Tensor Core | 1,979 teraFLOPS* | 1,513 teraFLOPS* | 3,958 teraFLOPS* |
| FP16 Tensor Core | 1,979 teraFLOPS* | 1,513 teraFLOPS* | 3,958 teraFLOPS* |
| FP8 Tensor Core | 3,958 teraFLOPS* | 3,026 teraFLOPS* | 7,916 teraFLOPS* |
| INT8 Tensor Core | 3,958 TOPS* | 3,026 TOPS* | 7,916 TOPS* |
| GPU memory | 80GB | 80GB | 188GB |
| GPU memory bandwidth | 3.35TB/s | 2TB/s | 7.8TB/s |
| Decoders | 7 NVDEC, 7 JPEG | 7 NVDEC, 7 JPEG | 14 NVDEC, 14 JPEG |
| Max thermal design power (TDP) | Up to 700W (configurable) | 300-350W (configurable) | 2x 350-400W (configurable) |
| Form factor | SXM | PCIe dual-slot air-cooled | 2x PCIe dual-slot air-cooled |
| Interconnect | NVLink: 900GB/s; PCIe Gen5: 128GB/s | NVLink: 600GB/s; PCIe Gen5: 128GB/s | NVLink: 600GB/s; PCIe Gen5: 128GB/s |
| Server options | NVIDIA HGX H100 Partner and NVIDIA-Certified Systems™ with 4 or 8 GPUs; NVIDIA DGX H100 with 8 GPUs | Partner and NVIDIA-Certified Systems with 1–8 GPUs | Partner and NVIDIA-Certified Systems with 2-4 pairs |
| Multi-Instance GPUs | Up to 7 MIGS @ 10GB each | Up to 7 MIGS @ 10GB each | Up to 14 MIGS @ 12GB each |

\* With sparsity.

Table Source: NVIDIA

As you can see in the specifications, the H100 is packed with power. On paper, Nvidia wins this Intel Gaudi 3 Vs Nvidia H100 matchup comfortably.

However, Intel claims that its chip will outperform the NVIDIA H100 and H200, a claim that still needs to be confirmed because Intel has not yet revealed the chip’s detailed specifications.
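Headline numbers from the two vendors are not always quoted on the same basis, so it helps to normalize them before comparing. The Python sketch below does that for FP8 throughput, memory capacity, and memory bandwidth. It rests on two assumptions that we label in the code: that the H100 Tensor Core figures are sparse peaks worth twice the dense rate (as NVIDIA’s datasheet footnote indicates), and that Intel’s 1,835 TFLOPS claim is a dense figure. The class and function names are ours.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    name: str
    fp8_tflops_listed: float    # FP8 figure exactly as printed in the spec table
    listed_with_sparsity: bool  # ASSUMPTION: True means the figure is a sparse peak
    memory_gb: float
    bandwidth_tbps: float

    @property
    def fp8_tflops_dense(self) -> float:
        # ASSUMPTION: a sparse peak is exactly 2x the dense peak.
        if self.listed_with_sparsity:
            return self.fp8_tflops_listed / 2
        return self.fp8_tflops_listed


# Values taken from the two spec tables in this article.
GAUDI3 = Accelerator("Intel Gaudi 3 (OAM)", 1835.0, False, 128.0, 3.7)
H100_SXM = Accelerator("Nvidia H100 SXM", 3958.0, True, 80.0, 3.35)


def relative(a: Accelerator, b: Accelerator) -> dict:
    """Ratios of a's figures to b's (1.0 means parity)."""
    return {
        "fp8_dense": a.fp8_tflops_dense / b.fp8_tflops_dense,
        "memory": a.memory_gb / b.memory_gb,
        "bandwidth": a.bandwidth_tbps / b.bandwidth_tbps,
    }


if __name__ == "__main__":
    for metric, ratio in relative(GAUDI3, H100_SXM).items():
        print(f"Gaudi 3 / H100 SXM {metric}: {ratio:.2f}x")
```

If either assumption is wrong, the throughput ratio changes accordingly. Peak figures also say nothing about software maturity or real workloads, so treat the output as a sanity check rather than a benchmark.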

What Makes NVIDIA H100 Stand Out?

- Nvidia’s Tensor Cores accelerate AI workloads, with high performance for data types such as FP16 and TF32.

- The H100 uses high-bandwidth memory (HBM3 on the SXM model, HBM2e on the PCIe model), so its data transfer speed is exceptional, though the bandwidth varies by H100 model.

- The H100 is a top performer in processing power and can handle most language models and AI training workloads.

- The H100 Tensor Core GPU is designed to work with Nvidia’s HGX platform for high-performance AI infrastructure.

- The H100 can also connect to other H100 GPUs over NVLink, which is handy when handling massive data sets and chasing top performance.
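The interconnect row in the spec table hints at why the GPU-to-GPU link matters. As a rough illustration, this Python sketch estimates the ideal time to move a block of data over NVLink versus PCIe Gen5 using the H100 SXM bandwidths from the table. It is a lower bound under the assumption of perfect link utilization, ignoring latency and protocol overhead, and the function name is our own.

```python
# Bandwidths from the H100 SXM column of the spec table, in GB/s.
NVLINK_GB_PER_S = 900.0
PCIE_GEN5_GB_PER_S = 128.0


def transfer_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Ideal transfer time: size divided by bandwidth.

    ASSUMPTION: the link is fully utilized, with no latency or overhead.
    """
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return size_gb / bandwidth_gb_per_s


if __name__ == "__main__":
    size_gb = 80.0  # one H100's full memory, as an example payload
    nvlink = transfer_seconds(size_gb, NVLINK_GB_PER_S)
    pcie = transfer_seconds(size_gb, PCIE_GEN5_GB_PER_S)
    print(f"NVLink:    {nvlink * 1000:.0f} ms")
    print(f"PCIe Gen5: {pcie * 1000:.0f} ms")
    print(f"NVLink is {pcie / nvlink:.1f}x faster for this payload")
```

The ratio between the two links is fixed by their bandwidths, so it stays at about 7x regardless of payload size. Real transfers will be slower than these ideal figures.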

Intel Gaudi 3 Vs Nvidia H100: The Final Verdict

The best chip depends on your needs. If you want a cheaper, more energy-efficient option and prioritize inference tasks, Intel Gaudi 3 is the better choice for you.

In this matchup of Intel Gaudi 3 Vs Nvidia H100, Nvidia leads on performance, but Intel keeps pace on features and affordability.

However, if you want the best performance you can get your hands on, the Nvidia H100 is for you. There will always be competition between these companies as new tech arrives.

Still, it’s hard to name a single winner, since both companies are constantly refining these chips.

Nonetheless, it’s exciting to see this innovation, and both Gaudi 3 and H100 show major improvements and upgrades.
